In the first blog of this two-part series, we built a cool pair of NeoPixel glasses that are powered by your CodeX. Now that the hardware is complete, we get to the fun part: the software! In this blog, we will code up some cool, flashy animations for the CodeX glasses.

NeoPixels and the CodeX

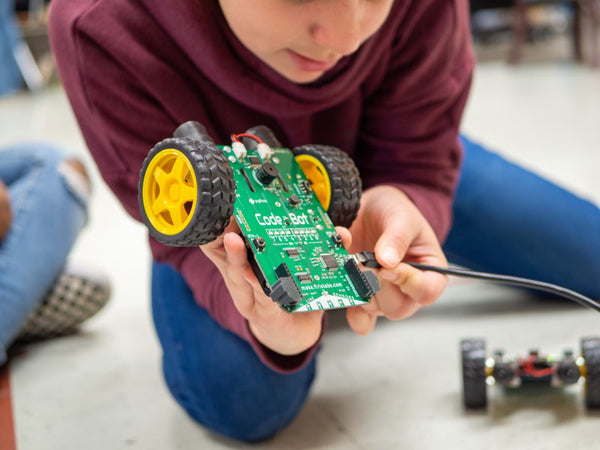

Your CodeX has four NeoPixels built in. The CodeX Python library has built-in support for these LEDs, and you can use the same library to control your own added pixels. The built-in NeoPixels are those little white boxes labeled “RGB” in the upper left and upper right corners of the CodeX.

Each NeoPixel contains three LEDs (red, green, and blue) and a driver chip that reads serial data and lights them up. Each pixel has a single input wire. You control the pixels by sending 24 bits of serial data on this wire. That’s 8 bits (one byte) for red, 8 bits for green, and 8 for blue. The higher the values you send, the brighter the individual LEDs will be. The value 0 is off, while a value of 255 is full on. Thus a 24-bit value of (255, 255, 255) is full red plus full green plus full blue, which makes white. Warning: NeoPixels can get very bright!

Each pixel also has a single output wire that can be connected to the input wire of another pixel. When the first pixel has read its 24 bits of data, it passes any subsequent bits to the next pixel. When this second pixel has read its data, it passes the remaining bits on to the next pixel and so on down the line.
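The pass-it-along behavior can be imitated in plain Python on any computer. This is only a sketch of the idea: the function name feed_chain is made up for illustration, and real pixels do this in hardware with strict timing.

```python
def feed_chain(stream, num_pixels):
    """Simulate a daisy chain of pixels.

    Each pixel keeps the first 3 bytes (24 bits) it sees and passes
    everything after that out of its output wire to the next pixel.
    """
    latched = []
    remaining = bytes(stream)
    for _ in range(num_pixels):
        latched.append(tuple(remaining[:3]))  # this pixel's 24 bits
        remaining = remaining[3:]             # handed to the next pixel
    return latched


# Nine bytes on the wire are enough for three pixels.
stream = bytes([255, 0, 0,   0, 255, 0,   0, 0, 255])
pixels = feed_chain(stream, 3)
print(pixels)
```

The first pixel ends up holding the first three bytes, the second pixel the next three, and so on down the line.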

Let’s say you have a chain of 20 pixels you want to light up. First, you build a list of 20 red/green/blue values: one set of three values for each pixel. Now you grab the input line of the first pixel and rapidly stuff the first pixel’s three bytes down the wire one bit at a time. Next, you get the 2nd pixel’s values from your list and stuff those bits down the wire, followed by the 3rd pixel’s RGB values, and so on until you have crammed all 20 sets of values down that one tiny wire.
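To put numbers on that, here is a small desktop-Python sketch that flattens a list of 20 colors into the stream of bytes for the wire. The helper name build_stream is hypothetical, and the sketch ignores the per-strip byte order and the signal timing, both of which the real library handles for you.

```python
def build_stream(colors):
    """Flatten a list of 3-value colors into one byte stream, first pixel first."""
    out = bytearray()
    for first, second, third in colors:
        out += bytes((first, second, third))
    return bytes(out)


colors = [(10, 0, 0)] * 20          # one set of three values per pixel
stream = build_stream(colors)
print(len(stream), "bytes =", len(stream) * 8, "bits")
```

Twenty pixels at 24 bits each works out to 480 bits for every redraw of the chain.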

The bits go out quickly, and the timing is critical. Fortunately, the CodeX library does all the timing for you. All you have to do is make a regular old list of values in Python and pass it to the library for processing.

Want to learn more about NeoPixels? Check out the Adafruit guide to all-things NeoPixel.

External Chains

You can attach a chain of NeoPixels to each of the four peripheral connectors on the CodeX. These connectors are on the bottom of the board in the upper left and upper right corners.

The pins of each connector are labeled on the CodeX circuit board. The “G” is the ground pin. The “S” is the signal pin (where you send the data bits). The unlabeled center pin is the 5V pin to power your pixel strip.

First, your code needs to configure a neopixel object to talk to your strip of pixels:

    import board
    import codex
    import neopixel
    import time
    import random

    codex.power.enable_periph_vcc(True)  # turn on 5V power to the peripheral connectors

    # IO13 = EXP0
    # IO14 = EXP1
    # IO10 = EXP2
    # IO11 = EXP3

    # Glasses are 'GRB' with 2 rings of 24 (total of 48)
    neo = neopixel.NeoPixel(board.IO13, 48, pixel_order='GRB', auto_write=False)

    neo[0] = (100,0,0)  # pixel 0: red
    neo[1] = (0,100,0)  # pixel 1: green
    neo[2] = (0,0,100)  # pixel 2: blue
    neo.show()          # push the buffer out to the strip

    time.sleep(5)       # keep the program alive so the pixels stay lit

Line 7 above is very important! This tells the CodeX board to supply power on the 5V pins of the peripheral connectors. By default, the power output is turned off. It is up to you to turn the power on, and if you don’t, the pixels won’t light up. I’ve pulled out many a gray hair debugging pixels when I forgot this line of code. Save your hair; remember this line!

Line 15 builds a NeoPixel object to talk to the external pixel strip. You must pass in a few pieces of information to tell the library about your pixel strip. First, you tell the library which peripheral port you want it to use. In the last blog post, we connected the glasses to peripheral port 0. That signal wire is CodeX’s board.IO13 GPIO pin. If you are using another peripheral port, use the proper GPIO pin as shown in the comments on lines 9 through 12.
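If you switch ports often, a small lookup table can save you from hunting through comments. The sketch below only copies the port-to-pin pairs from the comments in the listing; the names EXP_PINS and pin_name_for_port are made up for illustration. It returns the pin name as a string so it runs anywhere. On the CodeX you would presumably turn that name into a pin with something like getattr(board, name), which is an assumption on my part.

```python
# Port-to-pin pairs copied from the comments in the setup code.
EXP_PINS = {0: "IO13", 1: "IO14", 2: "IO10", 3: "IO11"}


def pin_name_for_port(port):
    """Return the GPIO pin name for peripheral port 0, 1, 2, or 3."""
    if port not in EXP_PINS:
        raise ValueError("port must be 0, 1, 2, or 3")
    return EXP_PINS[port]


glasses_pin = pin_name_for_port(0)   # the glasses are on peripheral port 0
print(glasses_pin)
```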

Next, you tell the NeoPixel library how many pixels are on your strip. Our glasses are two rings of 24 for a total of 48 pixels.

Next, you tell the library what order the data bits must be sent in. Our pixel rings expect green data followed by red data followed by blue. That’s the pixel_order='GRB' on line 15. If your NeoPixels expect a different order, rearrange the letters to match (for example, 'RGB').
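Here is a rough picture of what the pixel order setting means. The library does this reshuffling internally; the helper to_wire_order below is a hypothetical stand-in written only to show the idea, not the library’s actual code.

```python
def to_wire_order(rgb, pixel_order="GRB"):
    """Reorder an (r, g, b) color into the order the strip expects on the wire."""
    channels = dict(zip("RGB", rgb))               # {'R': r, 'G': g, 'B': b}
    return tuple(channels[letter] for letter in pixel_order)


red_on_wire = to_wire_order((100, 0, 0))           # red, as written in our code
print(red_on_wire)
```

With a 'GRB' strip, the red value travels in the second byte. If the order is set wrong, your red and green simply swap places on the glasses.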

Finally, we tell the library not to automatically update the pixel strip with every change we make: auto_write=False. The library keeps track of the states of all the pixels it controls. Its default behavior (auto_write=True) is to redraw the entire chain every time you change a single pixel. But we want to make several changes to the data and only redraw the strip when we are done.

The neopixel library gives us back an object with an internal list to hold all the pixel values. We access the pixel values as we would access the elements of a regular old Python list, with the brackets. Lines 17, 18, and 19 write color values to the first three pixels. Pixel 0 gets red=100, Pixel 1 gets green=100, and Pixel 2 gets blue=100. Remember, values can be from 0 to 255.

Nothing actually happens to the strip until we call neo.show() on our library object. That tells the library to redraw the pixel chain. Once it is redrawn, we can make other changes to the pixel buffer. But the changes won’t be seen until the next neo.show() call.
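To see why batching changes matters, here is a toy stand-in for a pixel strip that just counts redraws. FakeStrip and fill_red are invented for this sketch and run on any computer; they only mimic the auto_write behavior described above.

```python
class FakeStrip:
    """Pretend pixel strip that counts how many times it gets redrawn."""

    def __init__(self, n, auto_write=True):
        self.buf = [(0, 0, 0)] * n
        self.auto_write = auto_write
        self.redraws = 0

    def __setitem__(self, i, color):
        self.buf[i] = color
        if self.auto_write:
            self.show()          # redraw after every single change

    def show(self):
        self.redraws += 1        # a real strip would shift out every pixel here


def fill_red(strip):
    """Set all 48 pixels, then report how many redraws that cost."""
    for i in range(48):
        strip[i] = (10, 0, 0)
    if not strip.auto_write:
        strip.show()             # one redraw when we are done
    return strip.redraws


redraws_auto = fill_red(FakeStrip(48, auto_write=True))
redraws_manual = fill_red(FakeStrip(48, auto_write=False))
print(redraws_auto, redraws_manual)
```

Setting 48 pixels costs 48 full redraws with automatic writes, but only one when we call show() ourselves.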

Finally, there is a 5 second sleep at the end of the program. This is very important too! As soon as your program ends, the CodeX turns the peripheral power back off, and all the pixels will go dark. We’ll keep the program alive (but asleep) for a few seconds so we can see the pixels. We won’t need this sleep in our final code. Our final code will never end; it will run forever flashing the pixels on the glasses.

Animation: Sparkle

Now for our first animation sequence! I call it “sparkle”. One cool part of creating code is naming all the things! The “Sparkle” sequence assigns a random color to each of the 48 pixels 10 times a second. The pixels appear to, well, sparkle.

This sequence is easy:

    import board
    import codex
    import neopixel
    import time
    import random

    codex.power.enable_periph_vcc(True)
    neo = neopixel.NeoPixel(board.IO13, 48, pixel_order='GRB',auto_write=False)

    def rand_color():
        return (random.randrange(0,11),random.randrange(0,11),random.randrange(0,11))

    def sparkle(num):
        for _ in range(num):
            for i in range(48):
                neo[i] = rand_color()
            neo.show()
            time.sleep(.1)

    while True:
        sparkle(40)

Lines 1 through 8 are our setup code from before.

On line 10, I’ve defined a function to return a random RGB color. This is a useful function we’ll use a lot in the code to come, so it is good to factor it into a reusable helper function. I have limited the range of each color component to 0-10, which is plenty bright and helps save battery power.
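For a rough feel of why the 0-10 range saves battery, here is a back-of-the-envelope sketch. It assumes each LED inside a pixel draws about 20 mA at full brightness and that current scales linearly with the value. Both are rule-of-thumb assumptions, not measurements from these glasses, and the helper name estimate_ma is made up for illustration.

```python
MA_PER_LED_FULL = 20   # ASSUMPTION: rule-of-thumb current per LED at value 255


def estimate_ma(colors):
    """Very rough current estimate (in mA) for a list of (r, g, b) colors."""
    total = 0.0
    for color in colors:
        for level in color:
            total += MA_PER_LED_FULL * level / 255   # assume linear scaling
    return total


full_white = estimate_ma([(255, 255, 255)] * 48)
dim_white = estimate_ma([(10, 10, 10)] * 48)
print(round(full_white), "mA at full white")
print(round(dim_white), "mA with every value at 10")
```

Under those assumptions, capping the values at 10 cuts the LED current to a small fraction of the full-white figure.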

Line 13 is the sparkle function. This function takes the number of “loops” you want it to make. Each loop is a bit longer than a tenth of a second (it takes time to build the data and shift it out). Later, when we have lots of animations, we can pass in how long the “sparkle” function runs before another animation takes over.

Lines 20 and 21 are our main loop. Right now we only have one animation, and we call it over and over.

This is the general pattern of our code. We’ll add new animations as functions that get called from our main loop. Now let’s make some more animations! Any ideas?

Animation: Pulse

The “pulse” animation works like a dimmer switch. The glasses are set to a random color and the dimmer switch goes up and down to “pulse” all the pixels to that color. All the pixels get brighter then dimmer, but all at the same color.

    def pulse(num):
        color = [0,0,0]
        rc = random.randrange(0,3)
        for _ in range(num):
            for i in range(10):
                color[rc] = i
                for t in range(48):
                    neo[t] = color
                neo.show()
                time.sleep(.05)
            for i in range(9,-1,-1):
                color[rc] = i
                for t in range(48):
                    neo[t] = color
                neo.show()
                time.sleep(.05)

    while True:
        sparkle(40)
        pulse(4)

I am only showing the new lines of code from now on. The code in your editor will grow as we add more and more functions.

Just like the sparkle function, the pulse function takes the number of cycles. A cycle is the LEDs going from off to full color and back down to off. The loop at line 4 counts the cycles. Note the variable “_”. You can call it anything you like, but since we aren’t using it anywhere, I like to call it “_”.

On line 3 we pick an LED to brighten/dim … 0 (red), 1 (green), or 2 (blue).

The loop at line 5 steps the brightness of the chosen LED up from 0 to 9 (range(10) stops just short of 10, our full value).

The loop at line 11 steps the brightness of the chosen LED back down from 9 to 0.
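You can check the brightness steps of one pulse cycle on any computer, no glasses required. This sketch just rebuilds the two range() calls from the pulse function and lists the colors a cycle would show; the fixed rc value stands in for the random pick.

```python
ramp_up = list(range(10))             # 0, 1, 2, ... 9
ramp_down = list(range(9, -1, -1))    # 9, 8, 7, ... 0
cycle = ramp_up + ramp_down

rc = 1                                # pretend the random pick was green
frames = []
for level in cycle:
    color = [0, 0, 0]
    color[rc] = level
    frames.append(tuple(color))

print(len(frames), "frames per cycle")
print(frames[:3], "...", frames[-3:])
```

At 0.05 seconds of sleep per frame, one full cycle takes a little over a second once you add the time to redraw the strip.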

And I added the call to the main loop at line 20. Now our glasses sparkle for a few seconds, pulse a random color 4 times, and repeat over and over.

Woo hoo! These glasses are already impressive, but we can add more. Any ideas yet? What’s next?

Animation: Infinity

I really want to do some true “animations” where pixels are moving around the glasses. Imagine a single pixel revolving around the lenses in a sideways figure-8 (the symbol for infinity). If you are facing the glasses, you’ll see the pixel start at the nose on the right lens and move clockwise around the lens back to the nose. Then the pixel jumps over the nose and goes counter-clockwise around the left lens. And then it jumps back over to the right lens in a continuous loop.

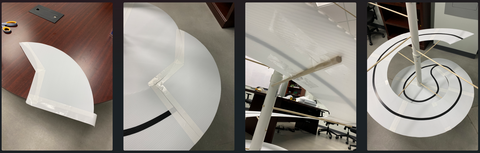

I could make this animation easier by totally rewiring the glasses as shown below:

The red numbers are the existing wiring configuration. When I turn on neo[0], I am controlling the pixel near the top of the right lens shown by the red number “0”. I would rather rotate that first pixel around to where the red number “18” is. And I could have glued the left ring in that position. But there is no way I could rotate the right lens to get the counter-clockwise orientation. (Give it a try in your mind.)

Instead, I’m going to make a mapping function so that my code can pretend the pixels are laid out like the blue numbers. But then the mapping function moves the data in the array around to match the physical layout shown by the red numbers.

I can use an array to define which pixel moves to where. Like this:

    MAP_INFINITY = [
        6, 7, 8, 9,10,11,12,13,14,15,16,17,18,19,20,21,22,23, 0, 1, 2, 3, 4, 5,
        29,28,27,26,25,24,47,46,45,44,43,42,41,40,39,38,37,36,35,34,33,32,31,30
    ]

    def do_map(data,dst,map):
        i = 0
        while i<48:
            dst[i] = data[map[i]]
            i += 1

The mapping array is defined on line 1. The actual mapping function is on line 6. The function takes data, which is our input source data (the pretend blue-number mapping above). The dst is the output array to populate (the physical red-number mapping above). We’ll just pass in the neopixel library object and let the function write straight to it. The map is the mapping array that lists where a physical pixel’s data is located in the input data. We only have one mapping now, but we can define others later (hint).

Have a look at the numbers in MAP_INFINITY. Starting with physical pixel "0", the data comes from our source array at index "6". Physical pixel "1" comes from the source array at index "7", and so on up to the last physical pixel "47", which comes from our source array at index "30". Check in our picture: the red number "47" matches up with the blue number "30".
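If you want to convince yourself the map is right, you can run the mapping function on a plain list on any computer. This sketch fills the source with its own index numbers, so after mapping, each physical slot shows which source index it pulled from. It also checks that every source index is used exactly once. The loop is written with range() here, but it does the same job as the while loop above.

```python
MAP_INFINITY = [
    6, 7, 8, 9,10,11,12,13,14,15,16,17,18,19,20,21,22,23, 0, 1, 2, 3, 4, 5,
    29,28,27,26,25,24,47,46,45,44,43,42,41,40,39,38,37,36,35,34,33,32,31,30
]


def do_map(data, dst, mapping):
    """Copy source data into physical order: dst[i] comes from data[mapping[i]]."""
    for i in range(48):
        dst[i] = data[mapping[i]]


# Every source index must appear exactly once, or a pixel would be lost.
is_permutation = sorted(MAP_INFINITY) == list(range(48))

source = list(range(48))      # source[i] == i, so the result is easy to read
physical = [None] * 48        # a plain list standing in for the neo object
do_map(source, physical, MAP_INFINITY)

print(is_permutation)
print(physical[0], physical[1], physical[47])
```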

    def infinity(num):
        for _ in range(num):
            for i in range(48):
                d = [(0,0,0)]*48
                d[i] = (10,10,10)
                do_map(d,neo,MAP_INFINITY)
                neo.show()

    while True:
        infinity(10)
        sparkle(40)
        pulse(4)

Once again, only the new things from the code are shown.

The “infinity” animation function takes an argument – the number of loops around the glasses you want the single pixel to make.

The loop on line 3 walks the pixel around the glasses from pixel 0 to pixel 47. Line 4 creates a new pixel list with all 48 LEDs off. Then line 5 sets the one target pixel to white.

Line 6 maps the source array onto the neo pixel map, and line 7 shows the pixels.

On line 10, our main loop adds the infinity animation.

See how easy the animation is once we have the pixels in a straight line in the proper order?
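Curious which physical pixels light up, and in what order? Turning the map inside out answers that. This is a desktop-Python sketch; the name inverse is mine and is not part of the glasses code.

```python
MAP_INFINITY = [
    6, 7, 8, 9,10,11,12,13,14,15,16,17,18,19,20,21,22,23, 0, 1, 2, 3, 4, 5,
    29,28,27,26,25,24,47,46,45,44,43,42,41,40,39,38,37,36,35,34,33,32,31,30
]

# MAP_INFINITY answers "which source index feeds this physical pixel?"
# The inverse answers "which physical pixel shows this source index?"
inverse = [0] * 48
for physical, source in enumerate(MAP_INFINITY):
    inverse[source] = physical

first_ring = inverse[:24]     # physical pixels lit during steps 0 to 23
second_ring = inverse[24:]    # physical pixels lit during steps 24 to 47
print(first_ring)
print(second_ring)
```

The first ring counts upward (wrapping from 23 back to 0), while the second ring counts downward. That opposite direction is what turns two circles into a figure-8.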

Other Mapping Functions

Some animation ideas I have show the same pixel patterns on both lenses – copies of each other. We can use mapping functions to make these copies and mirror images of the lenses.

How about this mapping function:

Copy Flip X

Whatever you draw on the right lens is duplicated on the left lens, but mirrored on the left lens’s X axis.

Copy

Maybe a straight copy with no flipping at all:

Copy Flip Y

Or maybe mirrored on the Y axis instead of the X:

Copy Flip X and Y

Or maybe mirrored on both the X and Y axes (just to complete all flip possibilities):

Just like with the “infinity” map, we can build mapping arrays to pass to our “do_map” function. Here they are:

    MAP_INFINITY = [
        6, 7, 8, 9,10,11,12,13,14,15,16,17,18,19,20,21,22,23, 0, 1, 2, 3, 4, 5,
        29,28,27,26,25,24,47,46,45,44,43,42,41,40,39,38,37,36,35,34,33,32,31,30
    ]

    MAP_COPY = [
        6, 7, 8, 9,10,11,12,13,14,15,16,17,18,19,20,21,22,23, 0, 1, 2, 3, 4, 5,
        6, 7, 8, 9,10,11,12,13,14,15,16,17,18,19,20,21,22,23, 0, 1, 2, 3, 4, 5,
    ]

    MAP_COPY_FLIP_X = [
        6, 7, 8, 9,10,11,12,13,14,15,16,17,18,19,20,21,22,23, 0, 1, 2, 3, 4, 5,
        5, 4, 3, 2, 1, 0,23,22,21,20,19,18,17,16,15,14,13,12,11,10, 9, 8, 7, 6,
    ]

    MAP_COPY_FLIP_Y = [
        6, 7, 8, 9,10,11,12,13,14,15,16,17,18,19,20,21,22,23, 0, 1, 2, 3, 4, 5,
        17,16,15,14,13,12,11,10, 9, 8, 7, 6, 5, 4, 3, 2, 1, 0,23,22,21,20,19,18
    ]

    MAP_COPY_FLIP_XY = [
        6, 7, 8, 9,10,11,12,13,14,15,16,17,18,19,20,21,22,23, 0, 1, 2, 3, 4, 5,
        18,19,20,21,22,23, 0, 1, 2, 3, 4, 5, 6, 7, 8, 9,10,11,12,13,14,15,16,17
    ]
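Typing 48 numbers by hand is easy to get wrong, so here is one way to build the same copy maps from the first ring alone. This is a sketch that happens to reproduce the arrays above; the names RIGHT and rotate are mine, and the reverse-and-rotate recipe is simply a pattern I read off the numbers, not an official method.

```python
# First ring of every copy map: source indexes 6..23 followed by 0..5.
RIGHT = [(i + 6) % 24 for i in range(24)]


def rotate(row, n):
    """Return a copy of row shifted left by n places (wrapping around)."""
    return row[n:] + row[:n]


MAP_COPY = RIGHT + RIGHT                              # same ring twice
MAP_COPY_FLIP_X = RIGHT + RIGHT[::-1]                 # second ring reversed
MAP_COPY_FLIP_Y = RIGHT + rotate(RIGHT[::-1], 12)     # reversed, then half a turn
MAP_COPY_FLIP_XY = RIGHT + rotate(RIGHT, 12)          # half a turn only

for name, m in [("COPY", MAP_COPY), ("FLIP_X", MAP_COPY_FLIP_X),
                ("FLIP_Y", MAP_COPY_FLIP_Y), ("FLIP_XY", MAP_COPY_FLIP_XY)]:
    print(name, m[24:])
```

Notice that flipping on both axes is the same as rotating the ring by half a turn (12 pixels out of 24).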

Animation: Wipe

The “wipe” animation completely fills the lenses with a single color. The wipe begins at index 0 and spreads to index 1, 2, 3, and so on up to index 23. The left lens will be a copy of the right using whatever mapping function you want from the list above.

    def wipe(color,map,reps):
        buffer = [color]*24
        for _ in range(reps):
            color = rand_color()
            for i in range(24):
                buffer[i] = color
                do_map(buffer,neo,map)
                neo.show()
                time.sleep(.05)
        return color

    while True:
        infinity(10)
        sparkle(40)
        pulse(4)
        last_color=(0,0,0)
        last_color=wipe(last_color,MAP_COPY,2)
        last_color=wipe(last_color,MAP_COPY_FLIP_X,2)
        last_color=wipe(last_color,MAP_COPY_FLIP_Y,2)
        last_color=wipe(last_color,MAP_COPY_FLIP_XY,2)

The wipe function takes the parameter “color”, which is the background color of the glasses. The “map” is the mirror mapping you want to use. And “reps” is the number of wipes to make – the number of loops just like the other animation functions take.

Line 2 builds the initial buffer with the background color. On line 4 we pick a new random color to fill with. The loop at line 5 sweeps over the array pixel by pixel and sets the new color.

Line 10 returns the random color that the function picked. This allows the main loop to pass this color as the background for another wipe. On line 16 of the main loop, we start with the color “black”. Each call to wipe passes in the return from the previous wipe.
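Here is the first part of a wipe played out on a plain list, so you can see which physical pixels change. This is a desktop sketch: the list named frame stands in for the neo object, the colors are fixed instead of random, and MAP_COPY is rebuilt with a short formula that gives the same 48 numbers as the array above.

```python
def do_map(data, dst, mapping):
    """Copy source data into physical order: dst[i] comes from data[mapping[i]]."""
    for i in range(48):
        dst[i] = data[mapping[i]]


# Same numbers as MAP_COPY: 6..23, 0..5, twice.
MAP_COPY = [(i + 6) % 24 for i in range(24)] * 2

old_color = (0, 0, 0)          # background passed in to the wipe
new_color = (0, 0, 10)         # stands in for the random pick

buffer = [old_color] * 24      # a 24-pixel buffer covers one lens
frame = [None] * 48            # stand-in for the 48 physical pixels

for i in range(3):             # just the first three steps of the wipe
    buffer[i] = new_color
    do_map(buffer, frame, MAP_COPY)

lit = [p for p, c in enumerate(frame) if c == new_color]
print(lit)
```

One 24-entry buffer drives all 48 pixels, because every number in a copy map is below 24.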

Fancy eh?

Animation: Wheel

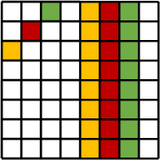

OK. This next animation is my favorite! First, the code draws a pattern of 4-2-4-2-4-2-4-2 pixels on the mirrored lenses (see below). The “2” pixel pairs are black. Each “4” pixel pattern has a single random color.

Each step of the animation pulls the first pixel off of the beginning of the list and appends it to the end. The effect is a continuous rotating wheel on the lens. And I mirror the right lens onto the left so they “turn” in opposite directions.

    def wheel(num):
        color1 = rand_color()
        color2 = rand_color()
        color3 = rand_color()
        color4 = rand_color()
        buffer = [0] * 24
        for i in range(4):
            buffer[i]= color1
            buffer[i+6] = color2
            buffer[i+12] = color3
            buffer[i+18] = color4
        for _ in range(num):
            do_map(buffer,neo,MAP_COPY_FLIP_X)
            neo.show()
            time.sleep(0.1)
            a = buffer[0]
            buffer = buffer[1:]
            buffer.append(a)

    while True:
        wheel(48)
        infinity(10)
        sparkle(40)
        pulse(4)
        last_color=(0,0,0)
        last_color=wipe(last_color,MAP_COPY,2)
        last_color=wipe(last_color,MAP_COPY_FLIP_X,2)
        last_color=wipe(last_color,MAP_COPY_FLIP_Y,2)
        last_color=wipe(last_color,MAP_COPY_FLIP_XY,2)

Lines 2 through 5 pick four random colors for the wheel. Line 6 starts the buffer with all pixels black.

The loop at line 7 adds the 4 colored “spokes” to the wheel.

Line 12 is the rolling loop. First it shows the wheel and pauses for a 10th of a second. Then line 16 gets the pixel value from the front of the list (index 0). Line 17 slices this first element off the front of the list and line 18 appends that element to the end.
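The roll can be tried out on a plain list. This desktop sketch uses letters instead of colors so the pattern is easy to read, and it folds the three rolling lines into a single slice expression. The names build_wheel and rotate_left are mine, not part of the glasses code.

```python
def build_wheel(c1, c2, c3, c4, off="."):
    """Build the 4-2-4-2-4-2-4-2 pattern for one 24-pixel lens."""
    buffer = [off] * 24
    for i in range(4):
        buffer[i] = c1
        buffer[i + 6] = c2
        buffer[i + 12] = c3
        buffer[i + 18] = c4
    return buffer


def rotate_left(buffer):
    """Move the first pixel to the end of the list."""
    return buffer[1:] + buffer[:1]


wheel_buf = build_wheel("A", "B", "C", "D")
print("".join(wheel_buf))

step = wheel_buf
for _ in range(6):             # six steps move the wheel one whole spoke over
    step = rotate_left(step)
print("".join(step))
```

After 24 steps the list is back where it started, so wheel(48) turns the wheel around exactly twice.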

Animations from the Community

What cool ideas do you have for animations on the glasses? I am excited to see what you come up with! Email me your code and we’ll share your awesome work with the rest of the CodeX community.

I’ve been thinking about a GUI to select my animations from the CodeX display screen. If I wear the CodeX on a lanyard around my neck, I can use the buttons to navigate the list of animations on the screen and pick the ones I want to show. Let me know if you have GUI ideas!

You can download the complete code from our public repository at https://bitbucket.org/firia/labs-demos/src/master/codex/neopixel_glasses/.

